
The Age of HDR has Arrived - Here's What it Means for Everything from Your TV to Your iPhone

The new technology that has been drawing attention in the field of imaging as the next step beyond 4K is “HDR,” or high dynamic range. While it's described as having an “incredible sense of realism,” what's so impressive about it in the first place? How is it different from HDR in photography? And what do you need in order to enjoy HDR content?

This is a translation from Japanese of an article published by ITmedia on August 22, 2017. Copyright 2017 ITmedia Inc. All Rights Reserved.

What is This New HDR (High Dynamic Range) Technology?

Figure: ColorEdge PROMINENCE CG3145 HDR reference monitor

In the last few years, the term HDR has been everywhere in imaging-related fields. HDR stands for High Dynamic Range. You may have seen it advertised as a selling point on a TV at your local home electronics shop; it means that the TV can display a wider dynamic range than traditional TVs.

If you're into photography, hearing “HDR” might make you think, “Oh, so it's like HDR photos.” It's true that the term has come into common use as smartphones like the iPhone have made it easy to take HDR photos, so even if you aren't really into photography, the odds are good that you have at least a passing familiarity with it. The thing is, HDR photography takes a completely different approach from HDR in modern video equipment.

The Difference between HDR Photos and HDR Video

Figure: What's so different from HDR mode on an iPhone?

Dynamic range refers to the difference (or range) between the darkest and the brightest parts of an image that a device can reproduce, while still retaining the ability to discern different tones. For example, if you take a picture indoors so that you can see what's inside the room, the sunlit outdoor scenery visible through the windows will be overexposed and washed out. On the other hand, if the picture is exposed for the lighting outdoors, the inside of the room will be way too dark. The odds are good that you've experienced this for yourself at some point.

This happens because the difference between the brightest part (the sunlight coming through the window) and the darkest part (inside the room) is too great. Now suppose we could expand the dynamic range so that the brightest parts aren't washed out to pure white and the darkest parts aren't crushed to pure black. The wider this range, the more realistic the image looks, because it begins to approach the range our eyes can perceive.

In fact, the dynamic range of the real world is incredibly wide. For example, the sun seen directly from the surface of the earth has a luminance of about 1.6 billion candela per square meter (cd/m²), while an ordinary outdoor scene is about 2,000 cd/m². The dynamic range that humans can discern is limited to about 30% of what exists in the natural world (though adjustments of the pupil can extend it further), but even that is quite a range.

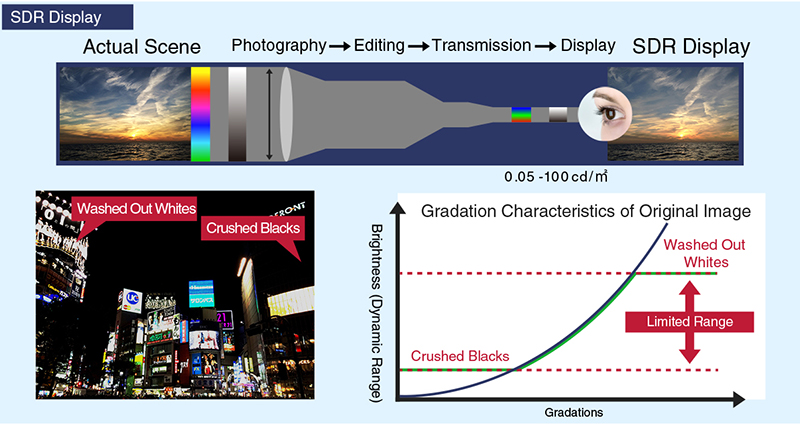

Video equipment such as cameras, on the other hand, has been designed and built around old dynamic range standards from the CRT era (now known as Standard Dynamic Range, or SDR), and these SDR standards cover only a narrow range of brightness, from 0.05 to 100 cd/m². In other words, existing SDR image standards can portray only a tiny slice of what the human eye can perceive.

Figure: The dynamic range of the existing SDR standards is limited to 0.05 to 100 cd/m². As a result, details are lost to washed-out whites and crushed blacks. This is because SDR content was produced on the assumption that it would be displayed on a CRT display.

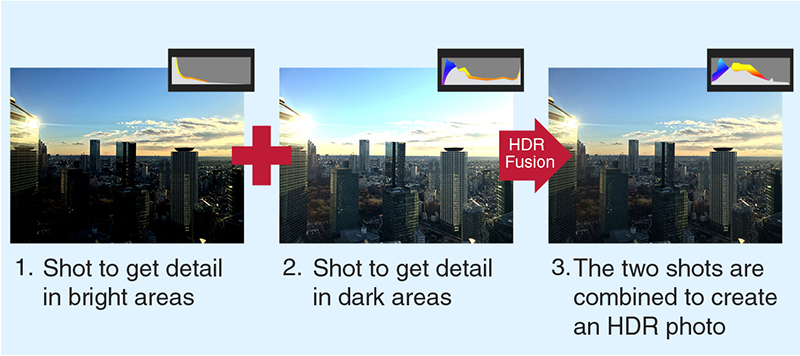

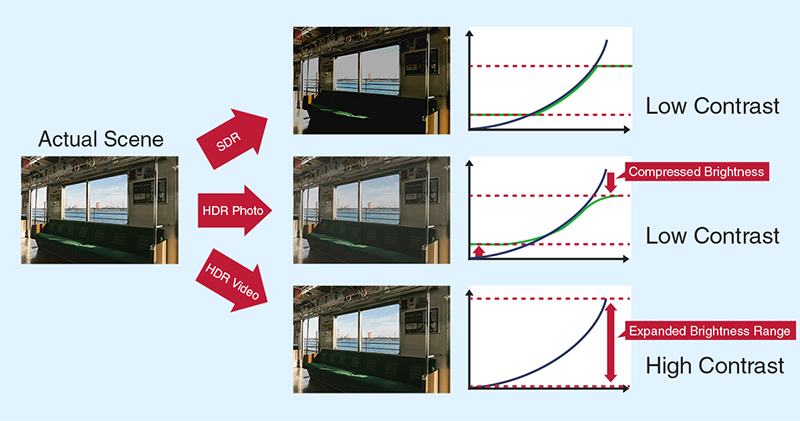

The HDR photo mode in the iPhone and other smartphones combines data from multiple exposures (one shot to capture detail in the dark areas and another to capture detail in the bright areas), using the properly exposed portions of each to compress the range of brightness. This keeps tones from being lost outright and gives an effect closer to viewing the scene directly with the human eye. Unfortunately, the compressed tones still have to fit within SDR, so while this keeps the brightest and darkest parts from losing detail, it doesn't expand the actual dynamic range of the image itself.

Figure: The HDR mode in smartphones combines multiple photos exposed at different levels to create an HDR photo while remaining within SDR dynamic range.

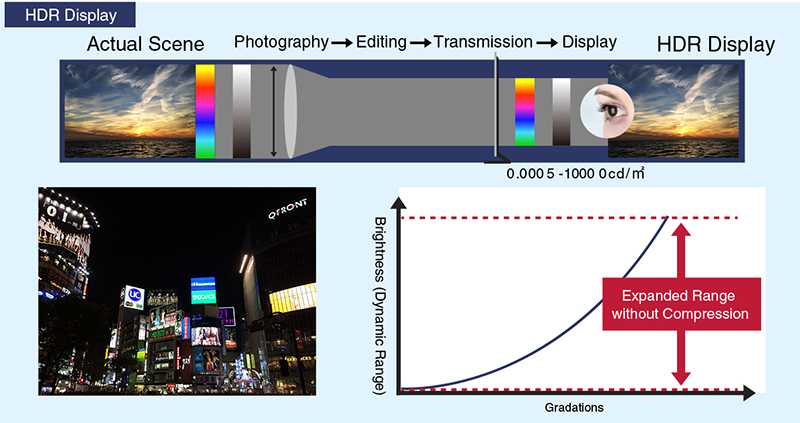
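To make the photo-mode approach concrete, here is a minimal Python sketch under simplifying assumptions: pixel values are linear, two exposures are merged with a textbook hat-shaped weighting, and the result is squeezed back into SDR with a simple Reinhard-style curve. The scene values and helper names (`capture`, `weight`, `merge_exposures`, `tone_map`) are hypothetical, and this is not the processing any particular phone actually uses.

```python
def capture(scene, exposure):
    """Simulate one SDR shot: scale relative scene radiance by exposure, clip to [0, 1]."""
    return [min(max(s * exposure, 0.0), 1.0) for s in scene]


def weight(pixel):
    """Hat-shaped trust weight: mid-tones count most, clipped pixels almost not at all."""
    return max(1.0 - abs(2.0 * pixel - 1.0), 1e-6)  # small floor avoids dividing by zero


def merge_exposures(shots):
    """Estimate relative scene radiance from (pixels, exposure) pairs by weighted averaging."""
    merged = []
    for i in range(len(shots[0][0])):
        num = sum(weight(px[i]) * px[i] / exposure for px, exposure in shots)
        den = sum(weight(px[i]) for px, _ in shots)
        merged.append(num / den)
    return merged


def tone_map(radiance):
    """Global Reinhard-style curve r / (1 + r): squeezes any radiance into [0, 1)."""
    return [r / (1.0 + r) for r in radiance]


scene = [0.02, 0.5, 40.0]  # dark interior, mid-tone wall, sunlit window (hypothetical)
shots = [
    (capture(scene, 2.0), 2.0),    # long exposure: shadows usable, window clipped
    (capture(scene, 0.02), 0.02),  # short exposure: window usable, shadows near black
]
radiance = merge_exposures(shots)  # approximately recovers the scene values
sdr = tone_map(radiance)           # compressed back into the SDR range [0, 1)
```

The merge recovers the wide range of the scene, but the final `tone_map` step compresses it back into [0, 1). That is the limitation described above: the photo keeps detail at both ends without gaining any real dynamic range.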

Conversely, HDR in modern video equipment refers to the ability to reproduce a much wider range of brightness, from 0.0005 to 10,000 cd/m², without the need for compression. At the top end alone, that is 100 times SDR's peak of 100 cd/m². Of course, HDR-compatible TVs on the market today can't display a dynamic range quite this dramatic, and even the viewing conditions for content production (ITU-R BT.2100) are designed around a maximum brightness of 1,000 cd/m² and a minimum of 0.005 cd/m². Even so, HDR content can undeniably render an image far more faithfully to the original scene. High-contrast scenes, like a streak of sunlight beaming into a dark room, become more impressive, and scenes like sunsets take on a remarkable realism even on your living room TV.

Figure: With HDR, the range of brightness that can be stored as data expands to cover 0.0005 to 10,000 cd/m². This makes it possible to convey contrast the way the human eye perceives it.

Figure: The difference between HDR for photos (compressed brightness curve) and HDR for video (expanded brightness range).
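The figures quoted above can be checked with a little arithmetic. This Python sketch expresses each range as a contrast ratio (peak divided by black level) and in photographic stops (log base 2 of that ratio); the function name `dynamic_range` is just for illustration.

```python
import math


def dynamic_range(black, peak):
    """Return (contrast ratio, photographic stops) for a luminance range in cd/m²."""
    ratio = peak / black
    return ratio, math.log2(ratio)  # each stop is a doubling of luminance


ranges = {
    "SDR": (0.05, 100.0),
    "HDR signal (full range)": (0.0005, 10_000.0),
    "BT.2100 production viewing": (0.005, 1_000.0),
}

for name, (black, peak) in ranges.items():
    ratio, stops = dynamic_range(black, peak)
    print(f"{name}: {ratio:,.0f}:1, about {stops:.1f} stops")

print("HDR peak / SDR peak:", 10_000.0 / 100.0)
```

SDR works out to 2,000:1 (about 11 stops), the full HDR signal range to 20,000,000:1 (about 24 stops), and the BT.2100 production viewing conditions to 200,000:1 (about 17.6 stops).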

One major factor accelerating the adoption of HDR in the imaging field is the evolution of hardware. Input devices like cameras already have sensitivity that can significantly exceed SDR (0.05 to 100 cd/m²), and the LCD backlighting in output displays has evolved to go several times beyond the brightness SDR images need. With OLED displays, it should be simple to expand that range further.

As a result, strong momentum has suddenly built up behind display devices and transmission systems that reproduce the image data from input devices as faithfully as possible, rather than sticking with the old SDR. As you know, HDR-compatible TVs continue to hit the market and are available at virtually any home electronics store. Just how different is HDR video, really?

HDR Video: A Far More Impressive Difference than the Move to 4K or 8K Video!

Much has been written on the topic, but unlike 4K and 8K, which can easily be understood through numbers alone, the impact of HDR is difficult to fully grasp just by reading about it. Put the other way around, though, once you see it for yourself, the difference between SDR and HDR is immediately unmistakable. For those who haven't had the opportunity to see it, allow me to share a few thoughts on the ColorEdge PROMINENCE CG3145 HDR reference monitor from EIZO.

Figure: ColorEdge PROMINENCE CG3145

My first impression on seeing various images displayed on the ColorEdge PROMINENCE CG3145 was that it really does have an incredible sense of realism. Until I saw this monitor in action, my mental image of HDR was based on HDR photos, which are created by combining different ranges of brightness, so I was expecting somewhat oversaturated colors and the like. It was nothing like that; instead, my main impression was that shadows and highlights were rendered realistically. Because the range was so wide, even fine details were properly reproduced, giving the onscreen image an incredible sense of depth. It was so realistic that it felt as though the things shown were actually there.

I'd heard that the human eye was sensitive to tone gradations in dark areas, but seeing this firsthand was the biggest surprise. In the example I saw of city lights reflecting off the surface of water at night, the subtle movement of the water's surface was visible only in shadow, yet the city lights reflected right next to these shadows were bright and vivid without becoming washed out and white. Scenes like this, mixing deep shadows and bright highlights, are ordinarily difficult to reproduce, yet the ColorEdge PROMINENCE CG3145 did a spot-on job of showing them.

There was no need to compare it to an SDR display (even though there was actually one next to it), because the difference was immediately clear. Of course, the ColorEdge PROMINENCE CG3145 I had a chance to look at was a prototype – a reference monitor designed for professionals creating HDR content – so it may have been a more extreme example than, say, standard HDR-compatible TVs. Even so, if you're interested in experiencing an HDR view of the world, I think it's absolutely worth making a trip to your local home electronics store and taking a look at the HDR-compatible TVs on display.

Figure: TV display at a mass retailer showing off 4K and HDR technologies.

What Do You Need to Enjoy HDR Content?

It's clear now that HDR can provide an unprecedented image experience. What, then, does an ordinary user need in order to enjoy HDR?

First of all, your TV needs to be compatible with HDR10 signals. This is usually easy to tell, since such TVs are marketed as HDR-compatible. However, some 4K TVs on the market still aren't HDR-compatible, so at the very least, make sure to check for that.

There are also TVs that can accept these signals, but still have fairly low brightness and contrast ratios. Naturally, the higher these specs are, the more realistic the image can be, but for whatever reason a lot of TVs still don't list these openly. In that case, one thing you can look for is the Ultra HD Premium logo. To help prevent confusion on the part of consumers, the UHD Alliance established this certification for devices, distribution, and content. It stands as a guarantee that you'll be able to fully enjoy certified HDR content on certified HDR devices.

Figure: The Ultra HD Premium logo promoted by the UHD Alliance (left) and Sony's 4K HDR logo (right).

We won't go into the details of every requirement for the Ultra HD Premium logo, but among them, a display must reach either a peak brightness of at least 1,000 cd/m² with black levels of 0.05 cd/m² or lower, or a peak brightness of at least 540 cd/m² with black levels of 0.0005 cd/m² or lower; the first option is aimed at LCD displays and the second at OLED displays. One catch is that these and other requirements are fairly steep hurdles, so certified products are, almost without exception, still fairly expensive.
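The two luminance options can be written as a simple check. This is a sketch that covers only the peak and black-level figures quoted above, not the full certification criteria; the function name and the example panels are hypothetical.

```python
def meets_uhd_premium_luminance(peak, black):
    """Check only the two peak/black-level options quoted above (cd/m²).

    All other Ultra HD Premium requirements are deliberately ignored.
    """
    option_lcd = peak >= 1000.0 and black <= 0.05    # option the article pairs with LCDs
    option_oled = peak >= 540.0 and black <= 0.0005  # option the article pairs with OLEDs
    return option_lcd or option_oled


# Hypothetical panels: (peak cd/m², black cd/m²)
for peak, black in [(1000.0, 0.05), (700.0, 0.01), (600.0, 0.0004)]:
    verdict = meets_uhd_premium_luminance(peak, black)
    print(f"{peak:>6.0f} / {black}: contrast {peak / black:,.0f}:1, passes: {verdict}")
```

The second example has a higher contrast ratio (70,000:1) than the first (20,000:1) yet fails, because each option sets absolute limits on both peak brightness and black level rather than on contrast alone.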

Once you have an HDR-compatible display, the next thing you need is HDR-compatible content. It depends on what type of content you want to watch, but the key thing to know here is the two formats: HDR10 and Dolby Vision. HDR10 is a standardized format for HDR content, and anything advertised as HDR-compatible can safely be regarded as HDR10-compatible. Dolby Vision, on the other hand, as the name suggests, is a separate HDR standard developed by Dolby. By increasing the bit depth to 12 bits (compared to HDR10's 10 bits), it aims to produce images with a stronger sense of reality and presence.
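What the extra two bits buy can be counted directly. This sketch assumes both formats spread their code values over the same brightness range, so it only compares how many steps are available, not how either format actually distributes them; `levels_per_channel` is an illustrative name.

```python
def levels_per_channel(bits):
    """Number of distinct code values a single color channel can hold."""
    return 2 ** bits


for name, bits in [("HDR10", 10), ("Dolby Vision", 12)]:
    levels = levels_per_channel(bits)
    colors = levels ** 3  # R, G and B each take one of `levels` values
    print(f"{name}: {levels:,} levels per channel, {colors:,} possible colors")

# Over the same brightness range, 12-bit steps are 2 ** (12 - 10) = 4x finer.
print(levels_per_channel(12) // levels_per_channel(10))
```

That is 1,024 versus 4,096 levels per channel, or roughly 1.07 billion versus 68.7 billion possible colors.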

The most distinctive feature of Dolby Vision, though, is its metadata format. HDR10 metadata is set once for a piece of content as a whole, while Dolby Vision carries metadata for each frame, allowing brightness to be set dynamically for individual scenes. This lets Dolby Vision-compatible TVs display content optimally for each TV's own brightness capabilities.
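A toy model helps show why per-frame metadata matters. In this sketch, a hypothetical TV that tops out at 600 cd/m² simply scales each frame linearly so that its peak fits. Real TVs use far more sophisticated tone-mapping curves, and neither HDR10's static metadata nor Dolby Vision's dynamic metadata consists of a single peak value; the function names and frame values are invented for illustration.

```python
def scale_static(frames, display_peak):
    """HDR10-style idea: one title-wide peak value sets a single scale factor."""
    title_peak = max(max(frame) for frame in frames)
    scale = min(1.0, display_peak / title_peak)
    return [[v * scale for v in frame] for frame in frames]


def scale_dynamic(frames, display_peak):
    """Dolby Vision-style idea: each frame's own peak sets that frame's scale factor."""
    scaled = []
    for frame in frames:
        scale = min(1.0, display_peak / max(frame))
        scaled.append([v * scale for v in frame])
    return scaled


# Hypothetical pixel luminances in cd/m² for two frames of one title.
frames = [
    [0.1, 50.0, 4000.0],  # bright beach scene
    [0.01, 5.0, 300.0],   # night scene
]
tv_peak = 600.0  # hypothetical TV peak brightness

print(scale_static(frames, tv_peak)[1])   # night scene dimmed along with the beach
print(scale_dynamic(frames, tv_peak)[1])  # night scene shown as mastered
```

With one title-wide value, the night scene is dimmed to 15% of its mastered brightness just because a different scene contains a 4,000 cd/m² highlight; with per-frame values, it can be shown as mastered.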

As for Dolby Vision's current adoption, in Japan Netflix, Hikari TV, and other streaming services are already offering Dolby Vision content. Naturally, the devices themselves need to be Dolby Vision-compatible too, and as more TV models support the standard and long-awaited compatible players go on sale, the standard is expected to spread.

Figure: Streaming services are now offering 4K/HDR-compatible content (Netflix pictured).

In addition to TVs, video game consoles like the PlayStation 4 and Xbox One S also support HDR. The sense of realism HDR produces applies to computer-generated graphics just as it does to live-action content. In fact, HDR rendering has long been an established part of PC gaming, but its dynamic range has always been spoiled by the limits of SDR displays. In the summer of 2017, a few HDR-compatible PC displays were announced, and combined with the influence of TVs, this may lead to rapid adoption of HDR.

Figure: Home video game consoles are also HDR-compatible (Microsoft Xbox One X pictured).

More Beautiful and More Realistic — The Five Elements Necessary for Increased Image Quality

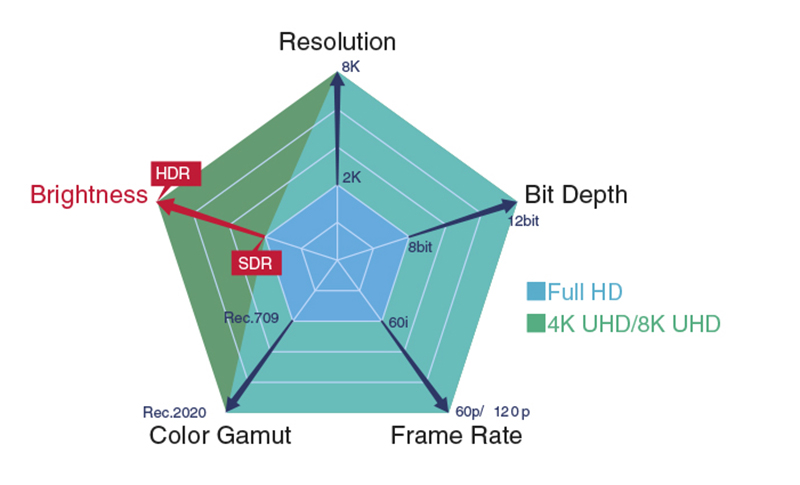

Let's step back and look at how the technologies behind more realistic images have been advancing so rapidly. Broadly speaking, image quality comes down to five factors: resolution, bit depth, frame rate, color gamut, and brightness. Of these, the first three all relate to pixels (how many there are, how finely each is graded, and how often they are updated), and they have all gradually evolved over time. Color gamut was at a standstill for a long time, but a few years ago the Rec. 2020 wide color gamut was established. That left brightness as the only factor yet to advance, and HDR represents that evolution.

Figure: The five elements of increased image quality. Of these, HDR represents an evolution of brightness.

As mentioned earlier, improving the display capabilities of TVs and other displays on the hardware side allows the data captured by cameras to be displayed without compromise, laying the foundation for more realistic, more beautiful images.

Based on these trends, EIZO has been working to develop the ColorEdge PROMINENCE CG3145 mentioned earlier (coming December 2017), an LCD display for pros who produce HDR content.

The ColorEdge PROMINENCE CG3145: Designed for Production Work in the Age of HDR

The ColorEdge PROMINENCE CG3145 uses an IPS LCD panel. LCD displays, and IPS displays in particular, ordinarily can't reproduce very deep blacks, which gives them a relatively low contrast ratio. There is, however, a technology that readily gives LCD displays high contrast ratios: local dimming. It divides the direct-lit LED backlight into separate areas and adjusts the backlight's brightness in each area based on the image being displayed. The drawback is that this causes brightness and color rendition to fluctuate.

Figure: Local dimming enables fine adjustment of backlight brightness to reproduce higher-contrast scenes (left: local dimming; right: no local dimming). As seen on the left, local dimming tends to cause halos (blurring caused by light leakage) around areas with a major difference between light and dark.

In addition, areas with a major difference between light and dark tend to have “halos,” which is when the outlines of bright areas become blurry due to light leaking into areas that should be dark. These disadvantages might not be such a big deal in displays designed for playback, but they are certainly not desirable in a reference monitor being used for production work.
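The halo problem follows directly from how local dimming works. The sketch below is a deliberately simplified one-dimensional model, assuming each zone's LEDs are driven to its brightest pixel and that a fully closed LCD cell still leaks 1/1,000 of the backlight. The zone size, panel peak, and leakage figure are hypothetical, and real implementations also model light spreading between zones.

```python
def local_dimming(target, zone_size, panel_peak=1000.0, native_contrast=1000.0):
    """Simplified 1-D local dimming: return the luminance each pixel actually shows.

    target: desired luminance per pixel (cd/m²); zone_size: pixels per backlight zone.
    native_contrast: how far the LCD layer alone can attenuate light (hypothetical).
    """
    leak = 1.0 / native_contrast  # a "closed" LCD cell still passes this fraction of light
    shown = []
    for start in range(0, len(target), zone_size):
        zone = target[start:start + zone_size]
        backlight = min(max(zone), panel_peak)  # drive the zone's LEDs for its brightest pixel
        for t in zone:
            if backlight == 0.0:
                shown.append(0.0)
                continue
            # The LCD cell can only attenuate between the leak floor and fully open.
            transmittance = min(max(t / backlight, leak), 1.0)
            shown.append(backlight * transmittance)
    return shown


# A single 800 cd/m² highlight on a near-black background.
row = [0.005] * 3 + [800.0] + [0.005] * 4

dimmed = local_dimming(row, zone_size=4)            # two backlight zones
global_bl = local_dimming(row, zone_size=len(row))  # one zone = no local dimming
```

In `dimmed`, the dark pixels sharing a zone with the highlight come out at 0.8 cd/m², 160 times brighter than intended, while those in the other zone stay at 0.005 cd/m², so the same input renders differently depending on its neighbors. With a single global backlight (`global_bl`), every dark pixel is lifted.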

EIZO instead uses a new type of IPS LCD panel that, even without local dimming, can produce blacks as dark as 0.005 cd/m² or lower. As a result, its native contrast ratio is 1,000,000:1. Another major advantage of the ColorEdge PROMINENCE CG3145 is that it maintains consistent brightness and color rendition for any type of image being worked on, whereas many other reference monitors temporarily reduce their brightness when displaying bright scenes in order to extend the display's lifetime.

Figure: ColorEdge PROMINENCE CG3145

The ColorEdge PROMINENCE CG3145, which is designed to serve as a reference monitor for color grading work, is expected to be fairly expensive. Of course, other steps of the content production workflow, from shooting to editing and compositing, do not require quite such a high level of reproducibility. For those steps, EIZO offers an upgrade service that enables (pseudo) HDR support on existing ColorEdge CG Series monitors by adding Perceptual Quantizer (PQ) gamma curves (PQ1000 and PQ300) and HLG (Hybrid Log-Gamma) to their color modes, providing an HDR solution for producing streaming content and movies. The service is recommended for creators who want to add an HDR preview environment at lower cost as they build a future HDR workflow.
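For reference, the two transfer functions named above are public standards: PQ is defined in SMPTE ST 2084 and, together with HLG, in ITU-R BT.2100. This Python sketch implements the PQ encode/decode curves and the HLG OETF from their published constants. It does not model how EIZO's PQ1000 and PQ300 color modes apply the curve on a particular monitor, which the article doesn't describe.

```python
import math

# SMPTE ST 2084 (PQ) constants, as also used in ITU-R BT.2100.
M1 = 2610 / 16384
M2 = 2523 / 4096 * 128
C1 = 3424 / 4096
C2 = 2413 / 4096 * 32
C3 = 2392 / 4096 * 32


def pq_encode(nits):
    """PQ inverse EOTF: absolute luminance in cd/m² (0 to 10,000) -> signal in [0, 1]."""
    y = min(max(nits / 10_000.0, 0.0), 1.0)
    ym = y ** M1
    return ((C1 + C2 * ym) / (1.0 + C3 * ym)) ** M2


def pq_decode(signal):
    """PQ EOTF: signal in [0, 1] -> absolute luminance in cd/m²."""
    e = min(max(signal, 0.0), 1.0) ** (1.0 / M2)
    y = (max(e - C1, 0.0) / (C2 - C3 * e)) ** (1.0 / M1)
    return 10_000.0 * y


# ITU-R BT.2100 HLG OETF constants.
A = 0.17883277
B = 1.0 - 4.0 * A
C = 0.5 - A * math.log(4.0 * A)


def hlg_oetf(e):
    """HLG OETF: relative scene light in [0, 1] -> signal in [0, 1]."""
    e = min(max(e, 0.0), 1.0)
    if e <= 1.0 / 12.0:
        return math.sqrt(3.0 * e)  # square-root segment for darker tones
    return A * math.log(12.0 * e - B) + C  # logarithmic segment for highlights


for nits in (100.0, 1_000.0, 10_000.0):
    print(f"{nits:>8} cd/m² -> PQ signal {pq_encode(nits):.3f}")
```

PQ maps absolute luminance up to the 10,000 cd/m² ceiling mentioned earlier; 100 cd/m² (the SDR peak) already lands at roughly half the signal range, leaving the upper half for highlights. HLG, by contrast, encodes relative scene light rather than absolute luminance.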

The ColorEdge PROMINENCE CG3145 is no longer available.
